Reading notes: Deep Delta Learning 🧠

2026.01.03

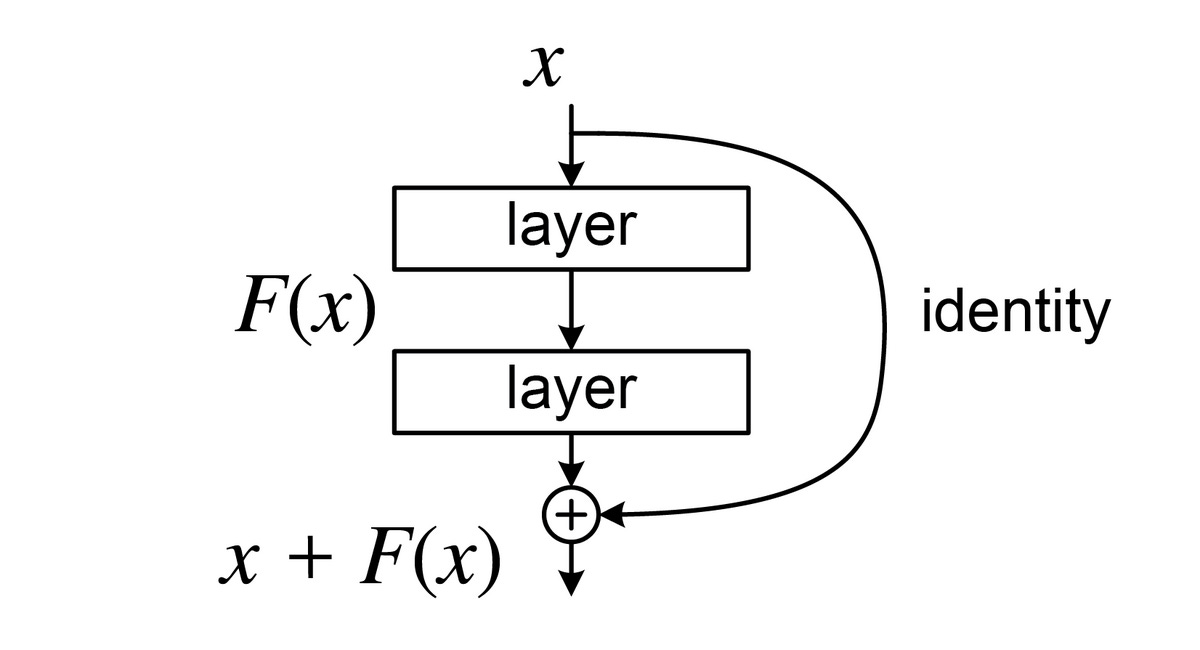

Very deep neural networks became practical to train largely because of residual connections. These connections let information flow through many layers without destabilizing training. But there is a hidden limitation: the shortcut path can only add to what is already there. It cannot directly remove or undo what was added before.

This post explains a paper called Deep Delta Learning, which introduces a small but important change. The change allows a network to keep, erase, or even reverse information as it goes deeper, something a classic residual shortcut cannot do directly.

1. Why classic ResNets hit a ceiling

When neural networks started getting very deep, training quickly became unstable. As signals passed through many layers, gradients weakened and useful information was lost. Models either failed to converge or learned very slowly.

Residual connections solved this problem.

Instead of forcing each layer to completely rewrite its input, a residual layer keeps the original representation and adds a small update on top of it. In its simplest form, the operation looks like:

output = input + small update

This design allows information to pass through unchanged when needed, making depth much safer. Because of this, ResNet-style architectures are now a standard component of modern deep learning models.
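As a minimal sketch of the idea above (the function names and the toy update branches are illustrative assumptions, not taken from any specific ResNet implementation), a residual step in NumPy might look like this:

```python
import numpy as np

def residual_layer(x, update_fn):
    """Classic residual step: keep the input and add a learned update."""
    return x + update_fn(x)

x = np.array([1.0, -2.0, 0.5])

# An update branch that outputs zeros leaves the input untouched,
# which is what makes very deep stacks safe to train.
passthrough = residual_layer(x, lambda h: np.zeros_like(h))

# A small update nudges the representation without replacing it.
# (0.1 * tanh is a stand-in for a real learned branch.)
nudged = residual_layer(x, lambda h: 0.1 * np.tanh(h))

print(passthrough)
print(nudged - x)
```

Note that the shortcut term is always a plain copy of `x`; only the added branch is learned.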

At the same time, this structure imposes a constraint. A residual layer can refine or extend what came before, but it cannot directly remove or undo earlier features. As networks grow deeper, this restriction becomes more noticeable.

2. The core idea: learning how to change the shortcut

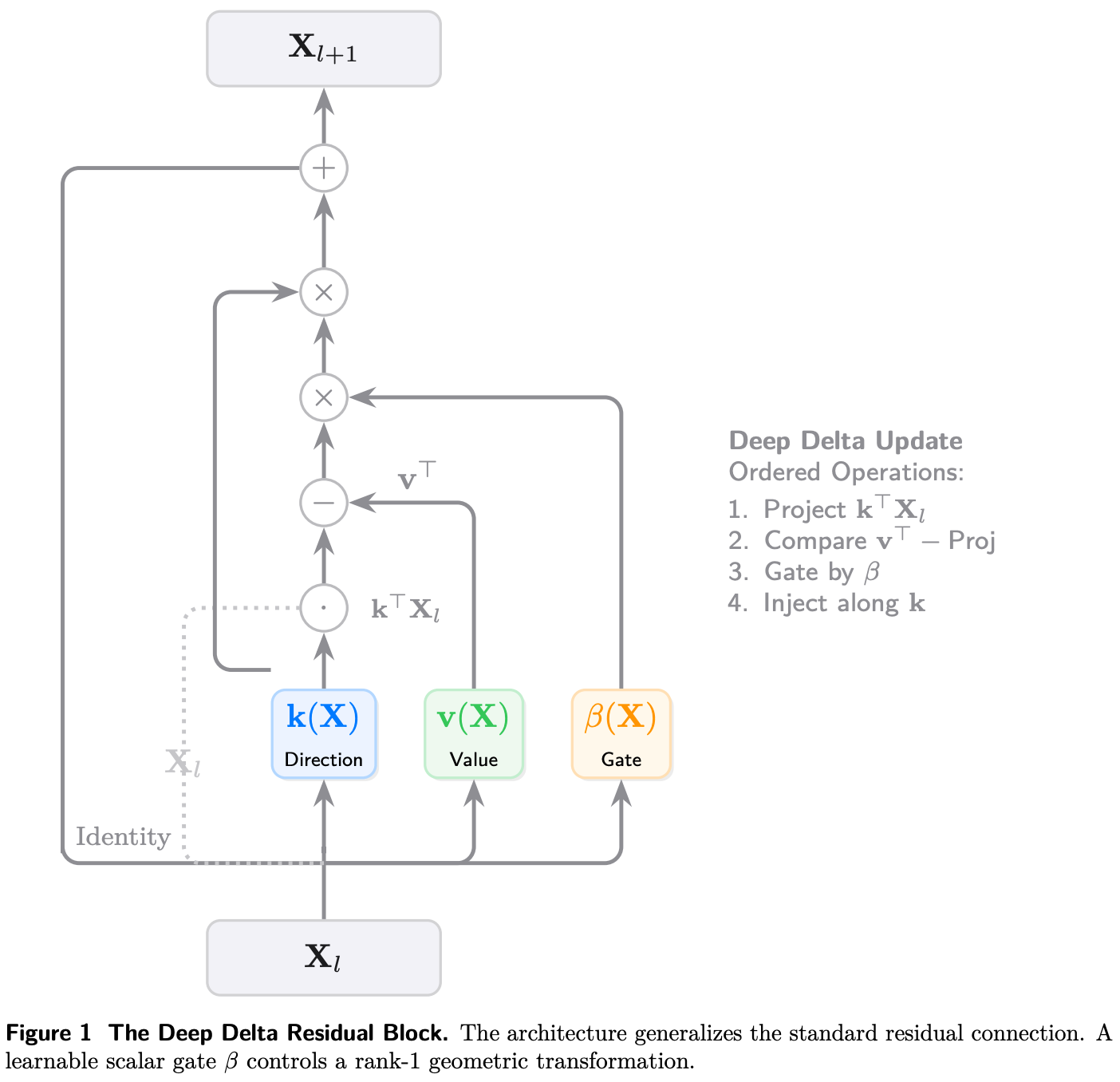

Deep Delta Learning keeps the same overall structure as a ResNet, but changes how the shortcut works.

In a standard ResNet, the shortcut always copies the input forward. In Deep Delta Learning, the shortcut becomes learnable: the model learns a single scalar gate that controls how strongly the old information is kept or modified.

This means the shortcut is no longer passive. Instead of blindly copying information forward, it becomes part of the learning process. The network can now decide how much it trusts what came before.

This change is very small, but it gives the model much more flexibility.
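The post does not spell out the formula, so the following is only a sketch consistent with the description above. It assumes a vector-valued hidden state $x_l$, a unit direction $k_l$, a scalar gate $\beta_l$, and a scalar value $v_l$ to write; all of these symbols are assumed notation rather than quotations from the paper.

```latex
x_{l+1}
  = \bigl(I - \beta_l\, k_l k_l^{\top}\bigr)\, x_l + \beta_l\, v_l\, k_l
  = x_l + \beta_l \bigl(v_l - k_l^{\top} x_l\bigr)\, k_l,
\qquad \lVert k_l \rVert = 1 .
```

Under this reading, the factor $I - \beta_l k_l k_l^{\top}$ is the learnable shortcut. With $\beta_l = 0$ it reduces to the identity, which is the plain copy a standard ResNet uses.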

3. One gate, three behaviors

That single learned number controls how the layer behaves.

When the value is close to zero, the layer behaves like a normal ResNet. Most information passes through unchanged. This keeps training safe and stable.

When the value is around one, the layer removes part of the old information and replaces it with new information. This allows the model to rewrite features that are no longer useful.

When the value grows beyond one, the layer goes further than erasing. Instead of gently adjusting features, it can cancel them and flip them to the opposite sign. This makes it possible to reverse mistakes made earlier in the network.

All of these behaviors come from the same simple mechanism. The model learns when each behavior is needed.
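The three regimes can be checked numerically. The sketch below assumes a gated rank-1 update of the form `x + beta * (v - k·x) * k` with a unit direction `k`. The function name `delta_step` and the choice of gate values 0, 1, and 2 are illustrative assumptions, not code from the paper.

```python
import numpy as np

def delta_step(x, k, v, beta):
    """Gated rank-1 update (assumed form): erase along k, then write v."""
    k = k / np.linalg.norm(k)          # work with a unit direction
    return x + beta * (v - k @ x) * k  # beta scales both erase and write

x = np.array([3.0, 4.0])
k = np.array([1.0, 0.0])               # direction the layer acts on

keep = delta_step(x, k, v=0.0, beta=0.0)     # gate ~0: input passes through
erase = delta_step(x, k, v=0.0, beta=1.0)    # gate ~1: component along k removed
flip = delta_step(x, k, v=0.0, beta=2.0)     # larger gate: component reversed
replace = delta_step(x, k, v=7.0, beta=1.0)  # gate ~1 with a new value written

for name, out in [("keep", keep), ("erase", erase),
                  ("flip", flip), ("replace", replace)]:
    print(name, out)
```

In this toy setup the component orthogonal to `k` (the second coordinate) is never touched. The gate only changes what happens along the chosen direction.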

4. Why this matters for learning

In classic residual networks, each layer only adds to the running representation as depth increases. Nothing is removed from it directly. Over time, this can lead to noisy or outdated features piling up.

Deep Delta Learning changes this. Because layers can now remove or correct information, the network can fix itself as it goes deeper. It becomes easier to represent patterns that change over time, cancel each other out, or move in opposite directions.

Importantly, this need not make training unstable. If learning the control value is difficult, the model can keep it near zero and fall back to standard residual behavior, so the stability of the shortcut is preserved.
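To illustrate the fallback, here is a small sketch under two assumptions that the post itself does not state: the gate is produced by squashing an unconstrained logit into the range (0, 2), and the update has the gated rank-1 form `x + beta * (v - k·x) * k`. The names `gate_from_logit` and `gated_step` are hypothetical.

```python
import numpy as np

def gate_from_logit(logit):
    """Squash an unconstrained logit into (0, 2). Assumed parameterization."""
    return 2.0 / (1.0 + np.exp(-logit))

def gated_step(x, k, v, logit):
    """Assumed gated rank-1 update, with the gate derived from a logit."""
    k = k / np.linalg.norm(k)
    beta = gate_from_logit(logit)
    return x + beta * (v - k @ x) * k

x = np.array([3.0, 4.0])
k = np.array([1.0, 1.0])

# As the logit becomes more negative, the gate shrinks toward zero
# and the layer's output approaches its input.
for logit in (-8.0, -4.0, 0.0):
    out = gated_step(x, k, v=1.0, logit=logit)
    print(logit, gate_from_logit(logit), np.linalg.norm(out - x))
```

With a gate driven this way, a negative bias on the logit would start each layer close to a pass-through, and the network would only move away from that when the data rewards it.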

5. A deeper connection to memory and learning

There is another way to think about Deep Delta Learning. The update rule resembles a gated memory update of the kind used in recurrent networks: each layer decides what to forget and what to keep, and here both decisions are driven by the same control signal. Instead of just stacking transformations, the network is continuously updating an internal state.

From this perspective, depth is not just a sequence of layers. It is a sequence of edits to memory. The network is not only learning new features; it is revising its understanding as it goes deeper.
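The memory view can be made concrete with a toy key-value store. This sketch treats the hidden state as a small matrix `M` whose rows are addressed by key directions. That interpretation, along with the names `memory_edit`, `k1`, and `k2`, is an assumption made for illustration, not the paper's code.

```python
import numpy as np

def memory_edit(M, k, v, beta):
    """Erase what M stores under key k and write v, both scaled by beta."""
    k = k / np.linalg.norm(k)
    old = k @ M                           # what the memory returns for k now
    return M + beta * np.outer(k, v - old)

M = np.zeros((3, 2))                      # 3 key dimensions, 2 value dimensions
k1 = np.array([1.0, 0.0, 0.0])
k2 = np.array([0.0, 1.0, 0.0])

M = memory_edit(M, k1, np.array([1.0, 2.0]), beta=1.0)  # store a first item
M = memory_edit(M, k2, np.array([5.0, 5.0]), beta=1.0)  # store a second item
M = memory_edit(M, k1, np.array([9.0, 9.0]), beta=1.0)  # revise the first item

read_k1 = k1 @ M   # the revised value, not a sum of old and new
read_k2 = k2 @ M   # the second item is left intact
print(read_k1, read_k2)
```

A purely additive update would have left the sum of the old and new values under `k1`. Here the same gate that writes the new value also removes the old one, which is the "edit" the section describes.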

Residual connections made deep learning stable by allowing information to flow forward unchanged. Deep Delta Learning keeps that stability but removes an important restriction.

By allowing networks to erase, replace, or correct information, depth becomes something the model can actively control, not just accumulate.