Reading Notes: Recursive Language Models 🚀

2026.01.04

The full paper is now available here: https://arxiv.org/abs/2512.24601v1.

Official codebase for Recursive Language Models (RLMs) here: https://github.com/alexzhang13/rlm

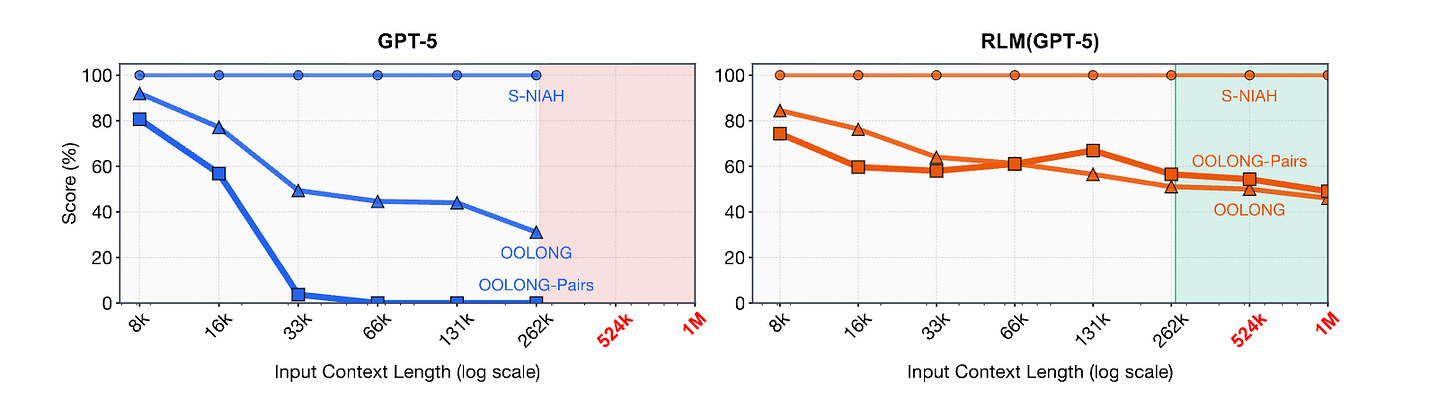

Imagine asking an AI to read an entire library and answer detailed questions about it. Traditional large language models struggle with very long documents because they can only attend to a limited number of tokens at a time. The Recursive Language Models (RLMs) paper proposes a different approach: instead of loading the whole context into the model's window at once, the model explores the text programmatically, recursively calling itself or other model instances on smaller pieces. According to the paper, this lets it handle inputs far beyond the native context window, with better accuracy, comparable or lower cost, and more in-depth reasoning.

1. The Long Context Challenge in Language Models

Language models like GPT operate within a fixed context window: they can process only a limited number of tokens (words, word pieces, and symbols) at a time. Input longer than the window cannot be handled in a single pass, and even inside the window, quality tends to degrade as the input grows and the model loses track of earlier material, an effect often called context rot. Traditional workarounds summarize or truncate the text, but both can discard crucial details.
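To make the truncation problem concrete, here is a minimal sketch. It assumes whitespace-separated words as a crude stand-in for real tokens, and `truncate_to_window` is a hypothetical helper, not something from the paper or its codebase.

```python
def truncate_to_window(text: str, window: int) -> str:
    """Keep only the last `window` words (a rough proxy for tokens)."""
    words = text.split()
    return " ".join(words[-window:])

# A long document whose key fact appears at the very beginning.
document = (
    "The access code is 7421. "
    + "Filler sentence. " * 1000
    + "What is the access code?"
)

visible = truncate_to_window(document, window=50)

# The question survives truncation, but the fact needed to answer it does not.
fact_survives = "7421" in visible
```

With a 50-word window, `fact_survives` is `False`: the model would see the question but not the sentence that answers it.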

2. What Is a Recursive Language Model?

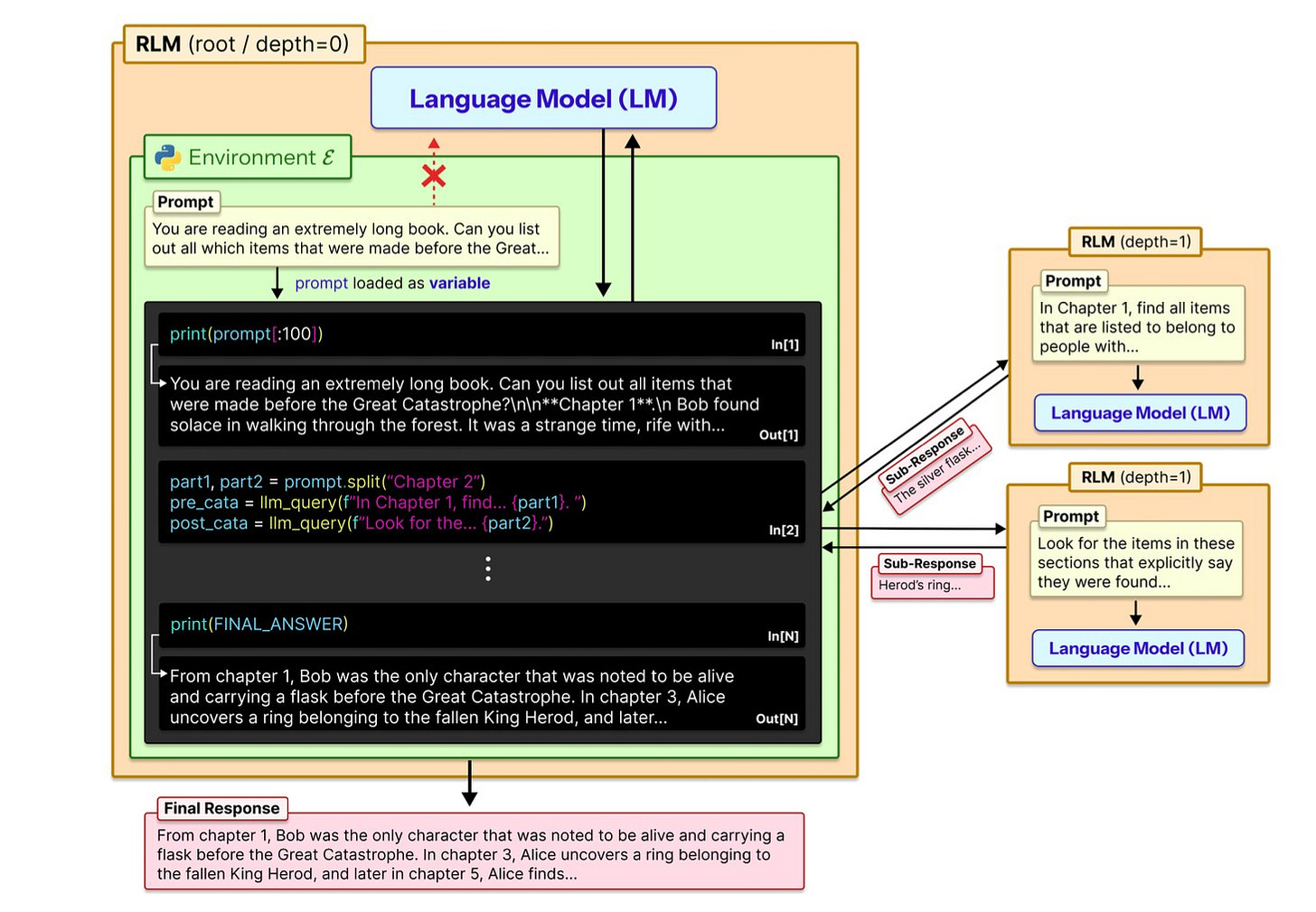

RLMs extend the idea of a language model call by embedding it within a programmable environment (typically a Python REPL). This environment stores the entire long input text externally and lets the model:

Read parts of the text into memory.

Write and execute commands (like Python code) to inspect and manipulate that text.

Recursively call itself or other model instances on sub-segments of text.

The main idea is to treat the whole prompt not as one block of tokens to be consumed in a single pass, but as an external object the model can explore: it poses focused sub-questions, examines particular parts of the text, and combines the results afterward.
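The kind of environment described above can be sketched roughly as follows. This is an illustration only: `ContextEnv`, `peek`, and `grep` are hypothetical names chosen for this example and are not taken from the official codebase.

```python
import re
from typing import List

class ContextEnv:
    """Hypothetical environment that keeps the long text outside the model's window."""

    def __init__(self, context: str):
        self.context = context

    def peek(self, start: int, end: int) -> str:
        """Return a character slice of the stored text."""
        return self.context[start:end]

    def grep(self, pattern: str, width: int = 40) -> List[str]:
        """Return a snippet of surrounding text for each regex match."""
        snippets = []
        for m in re.finditer(pattern, self.context):
            lo = max(0, m.start() - width)
            hi = min(len(self.context), m.end() + width)
            snippets.append(self.context[lo:hi])
        return snippets

env = ContextEnv(
    "chapter one. " * 500
    + "The treasure is buried under the oak. "
    + "chapter two. " * 500
)

# Instead of reading all of the text, inspect only the region that matters.
hits = env.grep(r"treasure")
```

The point of the design is that the model only ever receives the short snippets returned by calls like `grep`, while the full text stays in the environment.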

3. RLM Architecture: How it Works Under the Hood

At its core, an RLM is a wrapper around an existing language model. Calling an RLM looks like calling a regular model: you send in your question and the context, and you receive an answer. Inside the wrapper, however:

The root model receives the user prompt and accesses the environment with the long context stored externally.

The model programmatically decides how to split the task, which parts of text to read, and when to make recursive sub-calls to itself.

Each recursive call can fetch, analyze, or summarize specific segments, and then return structured insights back to the root.

Since each call handles only a manageable slice of the context, no single step is overwhelmed by millions of tokens. The environment keeps the full text and the intermediate results organized outside the model's window.
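The recursive flow above can be sketched in a few lines. This is a simplified illustration, not the paper's implementation: the fixed split-in-half policy is an assumption made for the example (in an RLM the root model decides how to decompose the task), and `rlm_answer` and `toy_llm` are hypothetical names, with `toy_llm` standing in for a real model call.

```python
from typing import Callable, List

def rlm_answer(question: str, lines: List[str],
               llm: Callable[[str, str], str], max_lines: int = 8) -> str:
    """Answer `question` over `lines`, recursing whenever the slice is too large.

    The fixed split-in-half policy is an assumption for illustration only.
    """
    if len(lines) <= max_lines:
        # Base case: the slice is small enough for a single model call.
        return llm(question, "\n".join(lines))
    mid = len(lines) // 2
    partials = [rlm_answer(question, half, llm, max_lines)
                for half in (lines[:mid], lines[mid:])]
    # Aggregation step: one more small call over the two partial answers.
    return llm(question, "\n".join(partials))

seen_sizes: List[int] = []  # number of context lines each call actually saw

def toy_llm(question: str, context: str) -> str:
    """Stand-in for a real model: return the first line mentioning 'access code'."""
    seen_sizes.append(len(context.splitlines()))
    for line in context.splitlines():
        if "access code" in line:
            return line
    return "no match"

lines = [f"filler line {i}" for i in range(100)]
lines[63] = "The access code is 7421."
answer = rlm_answer("What is the access code?", lines, toy_llm)
```

Here `answer` ends up as the line containing the access code, and `seen_sizes` shows that no individual call received more than eight lines of context, even though the input had a hundred.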

4. Performance and Benchmarks

The paper evaluates RLMs on several long-context reasoning tasks where conventional models either fail or degrade rapidly. Important observations include:

On long-context benchmarks such as OOLONG, as well as newly created tasks, RLMs outperform their base models by large margins. One early report noted a small model wrapped in RLM logic beating a much larger base model on long-context inputs.

Performance remains stable even with millions of tokens at inference, whereas standard models either fail or become prohibitively expensive.

Cost per query is comparable to or lower than traditional approaches due to reduced redundant computation.

These results suggest the RLM strategy is a viable new paradigm for scaling AI reasoning with huge context.

5. Why This Matters and What’s Next

Recursive Language Models represent a shift in how AI systems handle context:

They reflect a procedural reasoning mindset, where the model acts more like a researcher inspecting and querying a database than a student trying to memorize every detail at once.

They point toward future systems that can process entire books, scientific corpora, legal databases, or multi-document inputs without losing detail or context.

This scalable reasoning opens doors for advanced AI agents that can plan, debug, and synthesize knowledge over long horizons.

Rather than relying solely on bigger context windows or more tokens, RLMs show that smart structuring and recursion can unlock new capabilities with existing models and tools.